2026 Data Fusion Contest

SAR Temporal Storytelling - Large-Scale Analysis of Temporal InSAR Stacks

The Contest: Goals and Organization

Rapid advances in spaceborne Synthetic Aperture Radar (SAR) have transformed Earth Observation, enabling global, all-weather, day-night monitoring with ever-increasing temporal density. While optical imagery provides intuitive visual detail, it is constrained by cloud cover and illumination. SAR offers consistent measurements regardless of weather or lighting, making it uniquely suited for tracking subtle surface changes, dynamic processes, and rapid environmental events.

Recent advances in commercial SAR satellite systems have transformed how such imagery is used. These constellations are able to provide sub-meter imagery with flexible tasking and rapid revisit. However, whereas traditional large SAR systems are optimized for repeat-pass geometries and consistent collection, commercial SAR systems prioritize task-based, event-driven acquisitions, leading to greater variability in acquisition geometry across temporal stacks.

These temporal stacks may be densely sampled, but typically exhibit substantial variation in imaging mode, incidence angle, look direction, and orbital geometry – necessitating an evolution in how temporal monitoring problems are approached. While this geometric variability complicates temporal analysis, it also introduces new opportunities. The increased temporal density and diversity of observations provide richer information that can be exploited by modern analytical methods.

The 2026 IEEE GRSS Data Fusion Contest, organized by the Image Analysis and Data Fusion (IADF) Technical Committee of GRSS and Capella Space, aims to foster the development of innovative solutions for the real operational challenges encountered in the exploitation of commercial high-resolution SAR data.

To this end, the contest dataset consists of densely sampled, InSAR-compatible temporal stacks of very high-resolution X-band SAR imagery acquired across a wide range of imaging modes and viewing geometries. For the first time, a dense temporal stack of high-resolution InSAR-compatible SAR data from a commercial constellation is being made available at scale, providing a unique testbed for methods that exploit temporal richness while remaining robust to acquisition diversity.

Participants will submit a short description outlining their method, key insights, and results obtained using the contest dataset. The aim is to encourage creative use of globally distributed InSAR time series and to showcase techniques that can advance our ability to interpret and exploit long-term SAR interferometric observations.

Scientific papers describing the best entries will be included in the Technical Program of IGARSS 2026, presented in an invited session “IEEE GRSS Data Fusion Contest,” and published in the IGARSS 2026 Proceedings. Furthermore, organizers and contest winners will co-author a scientific article in IEEE JSTARS describing the winning approaches in greater detail.

Competition Phases

The contest consists of a single phase in which participants receive the released dataset and are free to develop, explore, and evaluate their methods. Contestants may use the provided data to design and test any analytical or visualization approach they consider relevant. Once their method is ready, participants submit a short description outlining their idea, methodology, and results obtained with the provided data. After the submission deadline, the organizers will review all entries and select the winners based on the quality, originality, and usefulness of the proposed approaches (see below). After the evaluation, four winners will be announced. The winners will then receive feedback to help them write their final manuscripts, which will be included in the IGARSS 2026 proceedings. Manuscripts must follow the IEEE style and are limited to 4 pages (references do not count towards the page limit). Each manuscript describes the addressed problem, the proposed method, and the experimental results.

Calendar

- February 04: Contest opening: release of data. The evaluation committee starts to accept submissions.

- April 06: The evaluation committee stops accepting submissions.

- April 13: Winner announcement

- April 20: Internal deadline for papers, DFC Committee review process

- April 28: Submission deadline of final papers to be published in the IGARSS 2026 proceedings

- August 9-14: Presentation at DFC-dedicated IGARSS 2026 Session

The Data

Dataset Overview

This dataset consists of multiple densely sampled temporal stacks of very high-resolution X-band Synthetic Aperture Radar (SAR) imagery collected by the Capella Space satellite constellation. The data are intended to support advanced research in time-series analysis, change detection, object/activity understanding, and machine-learning-based SAR analytics, with a particular emphasis on robustness and generalization. The dataset spans a wide range of imaging geometries, acquisition modes, and observation conditions to reflect real-world operational diversity rather than a synthetic benchmark.

A core motivation behind this dataset design is to encourage the development of robust, generalizable algorithms, particularly for participants using deep neural networks (DNNs) or other data-driven techniques.

Analysis Possibilities

This dataset supports a broad range of exploratory analyses enabled by dense, high-resolution temporal sampling and diverse acquisition geometry. SLC products can be paired to form InSAR-compatible temporal stacks for interferometric change analysis and time-series deformation studies where repeatability permits. The inclusion of long-dwell, high-resolution spotlight-style collections also enables investigation of single-image 3D retrieval approaches (e.g., exploiting aperture diversity and geometric priors). More broadly, the multi-temporal stacks provide a foundation for pattern-of-life and activity characterization, allowing participants to study persistent change, episodic events, and evolving scene dynamics over time.
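As one illustration of interferometric change analysis, the sketch below estimates the sample coherence magnitude between two complex patches. It is a minimal example under stated assumptions: the two patches are already co-registered and cover the same ground area, reading the Capella SLC files is not shown, and the function name `coherence` and the synthetic pixel values are ours rather than part of any contest tooling. In practice an array library and a sliding estimation window would be used instead of plain Python lists.

```python
import cmath
import math


def coherence(patch_ref, patch_sec):
    """Sample coherence magnitude of two co-registered complex patches.

    Both inputs are flat sequences of complex pixel values covering the same
    ground area. The result lies in [0, 1] up to rounding: values near 1
    suggest an unchanged scene, low values suggest change or decorrelation.
    """
    cross = sum(a * b.conjugate() for a, b in zip(patch_ref, patch_sec))
    power_ref = sum(abs(a) ** 2 for a in patch_ref)
    power_sec = sum(abs(b) ** 2 for b in patch_sec)
    if power_ref == 0 or power_sec == 0:
        return 0.0
    return abs(cross) / math.sqrt(power_ref * power_sec)


# Synthetic data only: the secondary patch is the reference patch rotated by
# a constant phase, so the coherence should be 1 up to rounding.
reference = [complex(1, 2), complex(-3, 0.5), complex(0.2, -1.5), complex(2, 2)]
secondary = [z * cmath.exp(1j * 0.7) for z in reference]
gamma_same = coherence(reference, secondary)

# A patch with unrelated values yields a lower coherence.
unrelated = [complex(2, -1), complex(0.5, 3), complex(-1, -1), complex(-2, 0.3)]
gamma_changed = coherence(reference, unrelated)

print(gamma_same, gamma_changed)
```

A constant phase offset between the two patches does not reduce coherence, which is why the measure is useful for separating genuine scene change from a uniform phase shift.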

Participants are encouraged to pursue creative uses of the dataset and to incorporate geographic context – such as land cover, infrastructure, and environmental setting – when developing and interpreting their analyses.

Data Products and Metadata

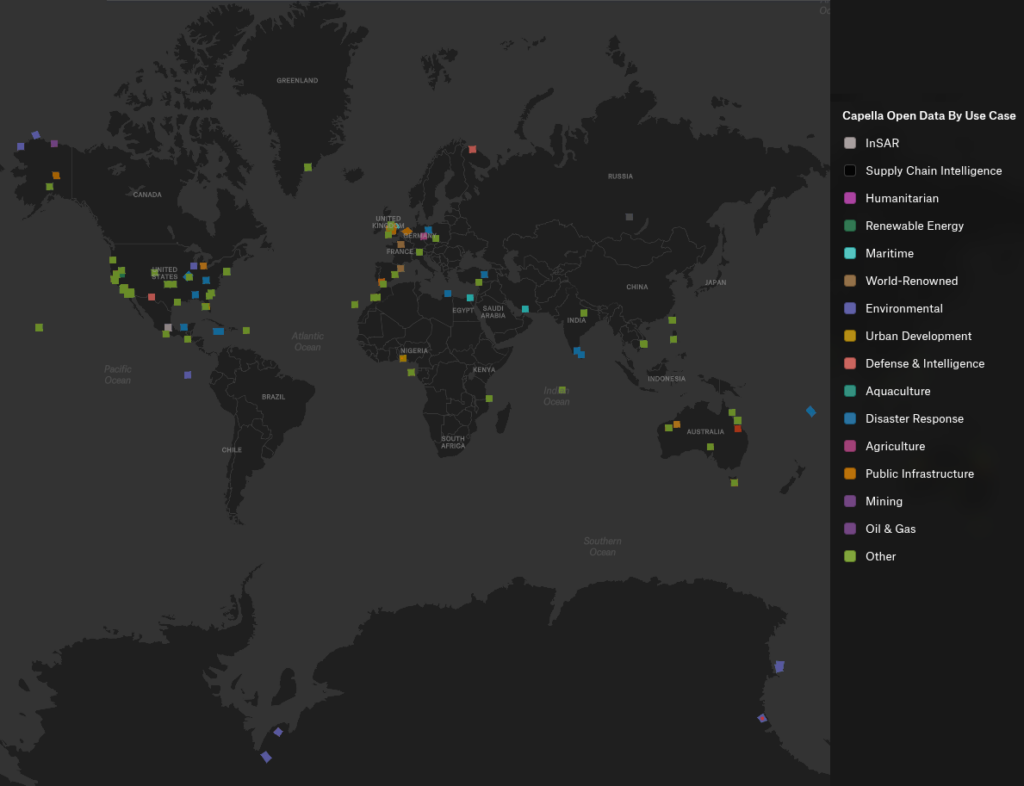

Approximately 1582 unique collects are provided in coordinated stacks collected over a year, allowing more than 17000 possible pairs to be formed. Stacks over some AOIs are deep, with over 50 images collected over the course of the year. The geographic distribution of the images is shown in Figure 1. This geographic diversity benefits machine-learning approaches by encouraging more generalizable, location-agnostic representations.
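As a rough sanity check on these figures, a stack of n collects yields n(n-1)/2 unordered pairs. The sketch below is illustrative only: how the 17000+ figure was derived is not stated here, and the reading that pairs are formed only within a stack is our assumption.

```python
from math import comb


def pairs_in_stack(n_collects: int) -> int:
    """Number of unordered image pairs in a stack of n_collects images."""
    return comb(n_collects, 2)


# A deep stack of 50 images already yields 1225 pairs on its own.
deep_stack_pairs = pairs_in_stack(50)

# Pairing all 1582 collects with each other, regardless of location, would
# give far more than 17000 pairs. The quoted figure therefore presumably
# counts only compatible pairs within stacks (our assumption).
all_vs_all_pairs = pairs_in_stack(1582)

print(deep_stack_pairs)   # 1225
print(all_vs_all_pairs)   # 1250571
```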

The modes represented in the data set and illustrated in Figure 2 are (more details about collection modes can be found here):

- Spotlight mode provides the highest spatial resolution by continuously steering the antenna to dwell on a fixed ground location, enabling detailed characterization of fine-scale structures at the expense of scene extent.

- Sliding Spotlight (Spotlight Wide) mode extends scene coverage by gradually steering the antenna along-track during collection, offering a balance between high spatial resolution and increased swath width.

- Stripmap mode images the surface with a fixed antenna pointing as the spacecraft moves along its orbit, producing wider-area coverage with consistent geometry and moderate spatial resolution suitable for large-scale monitoring.

The accompanying CSV file contains more details per scene. The headers are as follows:

- collect_id_ref: Unique identifier for the primary (reference) SAR acquisition in a paired or stacked comparison.

- collect_id_sec: Unique identifier for the matched secondary SAR acquisition corresponding to the reference collect.

- start_time_ref: Acquisition start time of the reference collect, expressed in UTC.

- start_time_sec: Acquisition start time of the secondary collect, expressed in UTC.

- platform_ref: Identifier of the satellite platform that acquired the reference collect.

- platform_sec: Identifier of the satellite platform that acquired the secondary collect.

- polarization_ref: Transmit–receive polarization of the reference collect.

- polarization_sec: Transmit–receive polarization of the secondary collect.

- mode: SAR imaging mode used for the acquisition (e.g., Spotlight, Sliding Spotlight, Stripmap).

- look_direction: Side-looking geometry of the acquisition relative to the flight direction (left-looking or right-looking).

- flight_direction: Orbit direction during acquisition (ascending or descending).

- incidence_ref: Incidence angle of the reference collect at scene center, expressed in degrees.

- incidence_sec: Incidence angle of the secondary collect at scene center, expressed in degrees.

- dif_incidence: Absolute difference in incidence angle between the reference and secondary collects, expressed in degrees.

- delta_graze_deg: Difference in grazing angle between the reference and secondary collects, expressed in degrees.

- latitude: Latitude of the scene center or reference point, expressed in degrees.

- longitude: Longitude of the scene center or reference point, expressed in degrees.

- height: Height above the reference ellipsoid or mean sea level at the scene center, expressed in meters.
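The columns above lend themselves to simple pair selection. The following sketch filters pairs by incidence-angle difference and by time separation using only the Python standard library. It rests on several assumptions: timestamps are ISO 8601 strings, the thresholds are arbitrary, the function name `select_pairs` is ours, and the two sample rows are made-up placeholders rather than real contest records.

```python
import csv
import io
from datetime import datetime

# Two made-up rows using a subset of the column names listed above.
# All values are placeholders for illustration, not real contest records.
SAMPLE_CSV = """collect_id_ref,collect_id_sec,start_time_ref,start_time_sec,mode,dif_incidence
ref-0001,sec-0001,2025-03-01T10:15:00+00:00,2025-03-04T10:15:00+00:00,Spotlight,0.2
ref-0001,sec-0002,2025-03-01T10:15:00+00:00,2025-04-10T22:15:00+00:00,Spotlight,3.5
"""


def select_pairs(csv_file, max_dif_incidence_deg=1.0, max_baseline_days=30.0):
    """Return pairs whose incidence difference and time separation are small.

    Assumes ISO 8601 timestamps; adjust the parsing if the contest CSV uses
    a different format.
    """
    selected = []
    for row in csv.DictReader(csv_file):
        t_ref = datetime.fromisoformat(row["start_time_ref"])
        t_sec = datetime.fromisoformat(row["start_time_sec"])
        baseline_days = abs((t_sec - t_ref).total_seconds()) / 86400.0
        if (float(row["dif_incidence"]) <= max_dif_incidence_deg
                and baseline_days <= max_baseline_days):
            selected.append(
                (row["collect_id_ref"], row["collect_id_sec"], baseline_days)
            )
    return selected


pairs = select_pairs(io.StringIO(SAMPLE_CSV))
print(pairs)
```

With the real file, the same function can be given an open file handle instead of the in-memory sample.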

The dataset includes multiple SAR data product types to support a range of analysis approaches.

- Single Look Complex (SLC) products provide complex-valued SAR imagery preserving both amplitude and phase information, enabling interferometric processing techniques.

- Geocoded (GEO) products are image-domain representations projected onto a geographic coordinate system, facilitating visualization, mapping, and integration with other geospatial datasets.

- Complex Phase History Data (CPHD) products are provided for selected collections, preserving raw phase history measurements prior to image formation and enabling custom focusing, algorithm development, and experimental processing.

- Geocoded Ellipsoid Corrected (GEC) products provide geocoded imagery projected to a flattened ellipsoid to help exclude terrain artifacts.
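The amplitude and phase carried by an SLC pixel can be separated as in the minimal sketch below. It assumes the complex pixel values have already been read into Python. Any radiometric scale factor stored in the product metadata is not applied here, and the function names are ours.

```python
import cmath
import math


def amplitude_db(pixel: complex, floor: float = 1e-12) -> float:
    """Amplitude of a complex pixel in decibels, 20*log10(|pixel|).

    The floor avoids taking the logarithm of zero for empty pixels.
    """
    return 20.0 * math.log10(max(abs(pixel), floor))


def phase_rad(pixel: complex) -> float:
    """Phase of a complex pixel in radians, in the range [-pi, pi]."""
    return cmath.phase(pixel)


example_pixel = complex(3.0, 4.0)   # synthetic value with magnitude 5
example_db = amplitude_db(example_pixel)
example_phase = phase_rad(example_pixel)
print(example_db, example_phase)
```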

Accessing the Data

The IEEE Data Fusion Contest 2026 dataset, provided by Capella Space, is distributed as open data through a public AWS S3 bucket. Users can access the collection via the published STAC catalog, browse it interactively through a provided Felt map, or retrieve the data programmatically, either as complete datasets or as selected assets, directly from S3 without authentication.
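Because the bucket is public, individual assets referenced by a STAC item can be fetched over plain HTTPS. The sketch below shows one way to pick asset links out of a STAC item and turn `s3://` URIs into virtual-hosted-style HTTPS URLs. It makes no network calls. The bucket name, region, item id, asset keys, and paths are hypothetical placeholders, and the helper names are ours; the real values come from the published STAC catalog.

```python
from urllib.parse import quote


def s3_uri_to_https(uri: str, region: str) -> str:
    """Convert s3://bucket/key into a virtual-hosted-style HTTPS URL.

    Links that are already HTTP(S) are returned unchanged.
    """
    if uri.startswith(("http://", "https://")):
        return uri
    if not uri.startswith("s3://"):
        raise ValueError(f"unsupported URI: {uri}")
    bucket, _, key = uri[len("s3://"):].partition("/")
    return f"https://{bucket}.s3.{region}.amazonaws.com/{quote(key)}"


def asset_hrefs(stac_item: dict, wanted=None) -> dict:
    """Map asset name to href for a STAC item, optionally filtered by name."""
    assets = stac_item.get("assets", {})
    return {
        name: asset["href"]
        for name, asset in assets.items()
        if wanted is None or name in wanted
    }


# Hypothetical STAC item: the id, asset keys, bucket, and paths are placeholders.
EXAMPLE_ITEM = {
    "type": "Feature",
    "id": "EXAMPLE_COLLECT_ID",
    "assets": {
        "SLC": {"href": "s3://example-bucket/example/path/EXAMPLE_SLC.tif"},
        "GEO": {"href": "s3://example-bucket/example/path/EXAMPLE_GEO.tif"},
    },
}

urls = {
    name: s3_uri_to_https(href, region="us-west-2")  # region is a placeholder
    for name, href in asset_hrefs(EXAMPLE_ITEM, wanted={"GEO"}).items()
}
print(urls)
```

Selecting only the assets needed for a given analysis keeps downloads small, which matters for a dataset of this size.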

Submission and Evaluation

Participants submit a concise document describing their contribution. The submission should clearly outline the overarching idea or goal, the methods or techniques applied, and the results obtained using the provided dataset. Visualizations, examples, and figures should be included to illustrate findings or highlight important insights. Other material (animations, additional figures, videos, etc.) can be included as supplemental material. Submissions should focus on how the proposed approach leverages the large-scale temporal InSAR data and what value it brings to understanding, exploring, or analyzing such datasets.

The document should use the IGARSS 2026 full-paper template. It must contain the names and institutional email addresses of all team members. Adding team members after submission is not possible. Each participant can be part of only one team.

All submissions will be reviewed by a committee. Each contribution will be assessed according to a set of criteria designed to reward technical rigor, creativity, clarity, and practical relevance. The committee will select the winning entries based on an overall evaluation across the following dimensions:

- Soundness and technical correctness: The method should be scientifically well-grounded, justified, and correctly implemented. Assumptions must be reasonable, and conclusions should follow from evidence.

- Originality and creativity: The approach should introduce novel ideas, insightful concepts, or innovative uses of the data. Creative problem framing or unconventional exploration is encouraged.

- Insightfulness: Submissions should demonstrate meaningful understanding of the data or reveal new perspectives, patterns, or phenomena. Insight can arise from methods, results, or interpretation.

- Usefulness and practical relevance: The method or findings should provide tangible value, e.g., improving analysis workflows, enabling new applications, or offering tools for interpretation and exploration.

- Effective use of the dataset: The approach should leverage the characteristics of the provided InSAR time series. Methods that make strong use of temporal information, spatial patterns, or global diversity will be viewed favorably.

- Computational efficiency or scalability: Since the dataset is large-scale, considerations of runtime or scalability could be included, though this is optional depending on contest goals.

- Clarity and quality of presentation: The submission should be well-structured, clearly written, and easy to understand. Claims must be supported by evidence or results.

- Quality of visuals and illustrations: Figures, plots, or visualizations should be meaningful, well-designed, and helpful for understanding the proposed approach or results.

- Reproducibility / transparency: Participants are required to make their code publicly accessible (e.g., via GitHub). The publicly shared implementation should be sufficiently clear and complete to make the described method understandable and potentially replicable, thereby strengthening fairness and scientific value.

Resources and Tutorials

Tutorials

- Access and Download

- Python notebook with examples on how to query and filter a STAC collection (as html)

- Scaling GEO Images in QGIS by Capella Space

- Opening and Exploring the Geo Images

Tools & Applications

- Python SDK for api.capellaspace.com by Capella Space

- Single Look Complex data reader for Capella SLC images, a Python module to convert Capella SLC data into an amplitude image, by Capella Space

Publications

- Analyzing LiDAR and SAR data with Capella Space and TileDB by Stavros Papadopoulos

- Open SAR data and scalable analytics by Norman Barker

- Radar Generalized Image Quality Equation Applied to Capella Open Dataset by Wade Schwartzkopf, Jason Brown, Gordon Farquharson, Craig Stringham, Michael Duersch, Jordan Heemskerk

Results, Awards, and Prizes

The first- to fourth-ranked teams will be recognized as winners. To be eligible for the prize, teams must contribute to the community by sharing their code openly, such as on GitHub. The winning teams will:

- Present their approaches in a dedicated DFC26 session at IGARSS 2026

- Publish their manuscripts in the Proceedings of IGARSS 2026

- Receive IEEE Certificates of Recognition

- Be awarded during IGARSS 2026, Washington, USA in August 2026

- Co-author a journal paper summarizing the DFC26 outcomes, which will be submitted with open access to IEEE JSTARS. The costs for open-access publication will be supported by the GRSS.

The Rules of the Game

- The dataset can be openly downloaded from the Capella Space Open Data listing on AWS (see above).

- To enter the contest, participants must read and accept the Contest Terms and Conditions.

- The results should be submitted as a PDF via the corresponding submission page. Optional supplemental material (e.g., high-resolution imagery, animations, etc.) can be submitted by providing download links within the PDF document. (Note: The submission page is based on Google Forms. Should the page not be available, please submit the PDF via email to dfc26@googlegroups.com, including the following information in the mail body: Primary Contact Name (First Name Last Name), Primary contact’s complete affiliation (Name of University or Organization), Primary contact’s email, Country, IEEE Membership Number (Optional), Team Members List (List all team members including their Full Name, Affiliation, and Email. (Format: Name – Affiliation – Email)), Link to Source Code (e.g., GitHub), Confirmation that no member of this team is participating in another team for this year's DFC, Confirmation that the code will be shared openly (e.g., GitHub)).

- Ranking between the participants will be based on the criteria as described in the Submission and Evaluation Section.

- The committee accepts submissions from February 04, 2026 until April 06, 2026, 23:59 AoE.

- Each team needs to submit a short paper of max. 4 pages (excl. references) by April 06, 2026, clarifying the team members, describing the used approach, and showing results. The paper must follow the IGARSS 2026 paper template and should be submitted via the submission page (see above).

- For the winning teams, the internal deadline for full paper submission is April 20, 2026, 23:59 AoE. Final paper submission deadline is April 28, 2026.

- Important: Only team members explicitly stated on these documents will be considered for the next steps of the DFC, i.e., being eligible to be awarded as winners and joining the author list of the respective potential publications (IGARSS26 and JSTARS articles). Furthermore, no overlap among teams is allowed, i.e., one person can only be a member of one team. Adding more team members after submission of the description for evaluation is not possible.

- Persons directly involved in the organization of the contest, i.e., the (co-)chairs of IADF as well as the co-organizers are not allowed to enter the contest. Please note that IADF WG leads and co-leads can enter the contest. They have been excluded from relevant information concerning the content of the DFC to ensure fair competition.

Failure to follow any of these rules will automatically make the submission invalid, resulting in the manuscript not being evaluated and disqualification from the contest.

Participants in the Contest are requested not to submit an extended abstract about the approach used for the DFC to IGARSS 2026 by the corresponding conference deadline. Only contest winners (participants corresponding to the four best-ranking submissions) will submit a 4-page paper describing their approach to the Contest by April 20, 2026. The received manuscripts will be reviewed by the Award Committee of the Contest, and the reviews will be sent to the winners. Winners will submit the 4-page full paper to the Award Committee of the Contest by April 28, 2026. The Award Committee will then take care of the submission to the IGARSS Data Fusion Contest Community Contributed Session, for inclusion in the IGARSS Technical Program and Proceedings.

For any questions, please contact the organizers at dfc26@googlegroups.com.

Acknowledgments

The IADF TC chairs would like to thank Capella Space for providing the data, and IEEE GRSS for continuously supporting the annual Data Fusion Contest.